OpenClaw’s integration of VirusTotal scanning reveals a critical shift in AI agent marketplace security. Discover how malicious ClawHub skills are detected and why AI marketplaces are prime targets for abuse.

The Growing Importance of AI Agent Marketplace Security

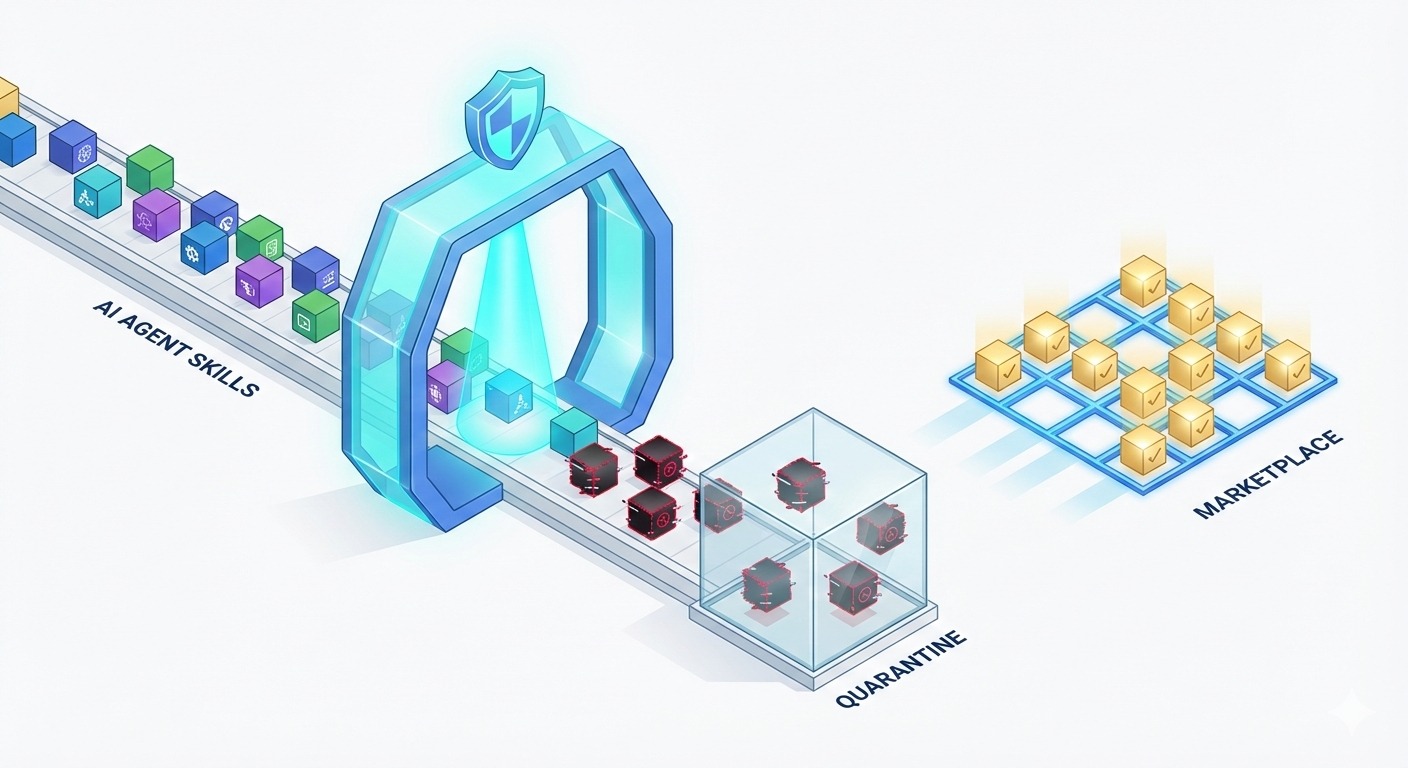

An AI agent marketplace is a digital platform where developers publish and distribute skills, tools, or extensions that autonomous AI agents can discover, integrate, and execute. It functions as an ecosystem that enables agents to expand their capabilities by accessing prebuilt functionalities, APIs, or task-specific modules that enhance their performance and autonomy.

As autonomous AI agents become more capable, the marketplaces that distribute their skills and extensions are becoming critical infrastructure. Platforms such as ClawHub, OpenClaw’s marketplace for agent skills, function much like app stores. They allow developers to publish capabilities that AI agents can download and execute.

However, this convenience introduces risk. If a malicious or poorly vetted skill is introduced into the ecosystem, it may be executed autonomously, potentially on a large scale. The integration of VirusTotal scanning into OpenClaw’s submission workflow reflects an understanding that AI agent marketplace security must evolve alongside agent capability.

“Marketplaces that distribute executable intelligence are part of the software supply chain,” said Julia Park, Head of Product Security at SecureAI Labs. “If you do not secure that distribution layer, you risk contaminating everything downstream.”

What OpenClaw’s VirusTotal Integration Actually Does

OpenClaw now scans submitted ClawHub skills using VirusTotal before they are approved for publication. VirusTotal aggregates threat intelligence from numerous antivirus engines and security vendors. When a file or artifact is uploaded, it is checked against known malware signatures, suspicious hashes, and behavioral indicators.

This process introduces a preventive control at the point of distribution. Instead of reacting after a malicious skill spreads, OpenClaw attempts to block threats before they reach users or autonomous agents.
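A minimal sketch of how such a publish gate might turn scan results into a decision follows. It assumes the per-engine verdict counts arrive as a dictionary shaped like the `last_analysis_stats` object in VirusTotal's public v3 file reports. The function names, the threshold, and the decision labels are hypothetical and are not taken from OpenClaw's actual pipeline.

```python
import hashlib


def artifact_id(data: bytes) -> str:
    """Return the hex SHA-256 digest commonly used to look up a file report."""
    return hashlib.sha256(data).hexdigest()


def review_decision(stats: dict, max_suspicious: int = 0) -> str:
    """Map verdict counts to a publishing decision (hypothetical policy).

    `stats` is assumed to look like:
    {"malicious": 0, "suspicious": 1, "harmless": 60, "undetected": 9}
    """
    malicious = stats.get("malicious", 0)
    suspicious = stats.get("suspicious", 0)

    if malicious > 0:
        # Any engine flagging the artifact as malicious blocks publication.
        return "reject"
    if suspicious > max_suspicious:
        # Ambiguous results are routed to a human reviewer.
        return "manual_review"
    return "approve"


# Example with made-up verdict counts for a submitted skill archive.
submission = b"example skill archive bytes"
print(artifact_id(submission)[:12], review_decision({"malicious": 0, "suspicious": 2}))
```

A stricter or looser policy only changes the thresholds. The point is that the decision is made before the skill becomes downloadable.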

Victor Nguyen, Director of Cloud Security at CyberTrust International, explained the significance of this approach: “Automated ecosystems require automated security controls. If submissions are published at machine speed, vetting must operate at machine speed as well.”

Why AI Agent Marketplaces Present Unique Risks

AI agent marketplaces differ fundamentally from traditional software platforms. Traditional platforms distribute applications that humans download, install, and operate. The user decides what actions an application performs and how its outputs are used.

AI agent marketplaces, however, distribute skills or capabilities that autonomous agents can discover, install, and execute independently. Instead of waiting for direct human input, these agents may act, interact, and make decisions on their own.

When agents retrieve and execute third-party skills, they may perform actions, access APIs, or process data without direct human oversight. A compromised skill could manipulate data flows, exfiltrate information, or trigger unintended behaviors across interconnected systems.

Mark Taylor, Chief Research Officer at the Open Source Security Foundation, has emphasized the importance of supply chain integrity in modern ecosystems. “Trust in digital ecosystems depends on knowing that what you install is what you expect,” he noted. “As AI agents begin installing and executing skills on their own, that trust boundary becomes even more critical.”

The Moltbook incident and similar AI platform vulnerabilities have already demonstrated how quickly misconfigurations or exposed artifacts can undermine confidence in new technologies. OpenClaw’s integration signals an attempt to avoid similar pitfalls.

The Limits of Static Malware Scanning

While VirusTotal scanning is a strong baseline control, it is not a complete solution. Signature-based scanning primarily detects known threats by comparing artifacts against databases of malicious signatures and threat indicators, so a novel or carefully obfuscated payload can pass unflagged.

Samantha Reid, AI Safety Lead at NextGen Secure Systems, cautioned against overreliance on any single control. “Static scanning identifies known malware patterns,” she said. “But sophisticated adversaries may design skills that behave maliciously only under certain runtime conditions. That requires dynamic observation.”

For AI agent marketplaces, this means that scanning should ideally be paired with sandbox testing, runtime monitoring, and behavioral analytics. Defense in depth remains relevant even within curated marketplaces.
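The gap between static and dynamic checks can be illustrated with a small sketch. Everything in it is hypothetical: the `Skill` type, the signature list, the allow-list of actions, and the simulated "sandbox" that simply calls the skill under two environments. It is a toy model of layering, not a real sandbox.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass
class Skill:
    name: str
    source: str                                   # text the static layer inspects
    run: Callable[[Dict[str, bool]], List[str]]   # returns the actions it attempts


KNOWN_BAD_SIGNATURES = ["DROP_PAYLOAD_V1"]            # hypothetical signature list
ALLOWED_ACTIONS = {"read_calendar", "send_summary"}   # hypothetical allow-list


def static_scan(skill: Skill) -> Tuple[bool, str]:
    """Layer 1: flag source text that contains a known signature."""
    hit = any(sig in skill.source for sig in KNOWN_BAD_SIGNATURES)
    return (not hit, "static: known signature" if hit else "static: clean")


def sandbox_probe(skill: Skill) -> Tuple[bool, str]:
    """Layer 2: run the skill under varied conditions and inspect its actions."""
    for env in ({"production": False}, {"production": True}):
        unexpected = set(skill.run(env)) - ALLOWED_ACTIONS
        if unexpected:
            return (False, f"sandbox: unexpected actions {sorted(unexpected)}")
    return (True, "sandbox: clean")


def vet(skill: Skill, layers=(static_scan, sandbox_probe)) -> Tuple[bool, List[str]]:
    """A skill passes only if every layer passes."""
    results = [layer(skill) for layer in layers]
    return all(ok for ok, _ in results), [msg for _, msg in results]


def sneaky(env: Dict[str, bool]) -> List[str]:
    """Behaves normally unless it detects a production environment."""
    actions = ["read_calendar"]
    if env.get("production"):
        actions.append("upload_contacts")
    return actions


skill = Skill("calendar-helper", "def run(env): ...", sneaky)
passed, findings = vet(skill)
print(passed, findings)
```

Here the static layer reports the skill as clean, and only the second layer, which exercises it under a different runtime condition, catches the extra action.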

Building Trust Through Proactive Security

OpenClaw’s move reflects a broader shift in how AI platforms approach governance and accountability. Rather than assuming developer goodwill, the platform embeds verification into its publishing pipeline. This approach aligns with modern DevSecOps practices, where security checks are integrated directly into development workflows.

By adopting VirusTotal scanning, OpenClaw demonstrates that operating an AI agent marketplace is not merely about innovation but also about stewardship. Automated ecosystems require structured oversight mechanisms to ensure safety and reliability.

Industry observers note that such measures may soon become baseline expectations rather than differentiators. As AI marketplaces expand, users and enterprises will likely demand transparency regarding how skills are vetted, scanned, and monitored.

The Broader Implications for AI Ecosystems

The integration of malware scanning into ClawHub highlights a larger trend. AI agent ecosystems are beginning to adopt established cybersecurity best practices from traditional software supply chains. Concepts such as artifact scanning, integrity verification, and threat intelligence sharing are being repurposed for autonomous environments.
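Integrity verification, one of the practices mentioned above, can be sketched in a few lines. The example assumes the marketplace records a SHA-256 digest when a skill is approved and that the agent can obtain that digest through a trusted channel before installing. Both assumptions, and the function names, are illustrative rather than a description of how ClawHub works.

```python
import hashlib
import hmac


def digest(artifact: bytes) -> str:
    """Hex SHA-256 digest of a skill artifact."""
    return hashlib.sha256(artifact).hexdigest()


def verify_before_install(artifact: bytes, published_digest: str) -> bool:
    """Return True only if the downloaded bytes match the approved digest."""
    # compare_digest avoids leaking how many leading characters matched.
    return hmac.compare_digest(digest(artifact), published_digest)


# Digest recorded at approval time (hypothetical artifact contents).
approved = b"skill package v1"
published = digest(approved)

print(verify_before_install(approved, published))                  # unmodified download
print(verify_before_install(b"skill package v1 + extra", published))  # altered after approval
```

A check like this ties the artifact an agent executes to the artifact that was scanned, so a package altered after approval is refused at install time.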

The challenge ahead lies in scaling these controls effectively. AI agents operate at speeds and volumes that can amplify both innovation and harm. Ensuring that marketplaces implement layered protections will be critical to sustaining trust in these systems.

OpenClaw’s VirusTotal integration does not eliminate all risk, but it sets an important precedent. It acknowledges that AI agent marketplaces are part of the broader digital supply chain and must be secured accordingly.

Conclusion

As AI agents become more autonomous and capable, the platforms that distribute their skills carry increasing responsibility. OpenClaw’s integration of VirusTotal scanning represents a proactive step toward strengthening AI agent marketplace security. By embedding threat detection into its publishing process, the company reinforces a key principle of modern cybersecurity: innovation must advance alongside robust, preventive safeguards.

In emerging AI ecosystems, trust is earned through visible and verifiable security controls. OpenClaw’s approach demonstrates how marketplaces can balance openness with protection, ensuring that the growth of AI agents does not come at the expense of safety.
